HUMANS RULE

An update: How Human Cognition Will Define the AI Era

March 2026: I’m continuing to update this article as I explore and learn. Many of the recent additions draw on Michael Pollan’s “A World Appears”, John Boyd’s OODA loop, and Antonio Damasio’s work. Jump in!


There’s a lot of noise right now about AGI coming for your job. Every week brings another headline about AI agents that can reason, plan, and execute autonomously. But buried in the hype is a fundamental misunderstanding about what AI actually does well — and what it doesn’t.

And here’s the strategic risk no one talks about: professionals who outsource their judgment to these systems don’t just become less effective. They risk obsolescence — by neglecting the very faculties that make them indispensable.

Here’s the truth: AI is, in a meaningful sense, one-dimensional. If I ask a question like “What’s the reimbursement code for MedDevice Procedure ABC?” it will do a phenomenal job getting me the answer: “2234.” It matches the words in my question to the words in its database using similarity scores, a measure of how close what I asked is to what the database holds. Simple question, simple retrieval. Done.
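To make the “similarity score” idea concrete, here is a minimal Python sketch. Treat it as an illustration, not as how any particular product works: the lookup table (including its second and third entries) and the function names `vectorize`, `cosine_similarity`, and `retrieve` are hypothetical, and bag-of-words cosine similarity stands in for whatever embedding a real system would use.

```python
import math
from collections import Counter
from typing import Tuple

# Hypothetical lookup table; only the first entry comes from the example above.
CODE_TABLE = {
    "reimbursement code for MedDevice Procedure ABC": "2234",
    "reimbursement code for MedDevice Procedure XYZ": "9001",
    "shipping address for MedDevice returns": "n/a",
}

def vectorize(text: str) -> Counter:
    """Turn text into a bag-of-words count vector (lowercased, edge punctuation stripped)."""
    words = (w.strip(".,?!\"'").lower() for w in text.split())
    return Counter(w for w in words if w)

def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two word-count vectors: 1.0 means identical direction."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(question: str) -> Tuple[str, float]:
    """Return the stored answer whose key is most similar to the question, plus its score."""
    q = vectorize(question)
    scores = {key: cosine_similarity(q, vectorize(key)) for key in CODE_TABLE}
    best_key = max(scores, key=scores.get)
    return CODE_TABLE[best_key], scores[best_key]

if __name__ == "__main__":
    answer, score = retrieve("What's the reimbursement code for MedDevice Procedure ABC?")
    print(answer, round(score, 2))
```

Notice what the function never asks: why I need the code, who is asking, or what happens if the answer is wrong. It only measures word overlap.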

My brain works in a similar fashion for simple recall. I can search my memory for a matching pattern and retrieve the answer. But here’s where humans and machines diverge dramatically: make the question complex.

The Multi-Dimensional Human

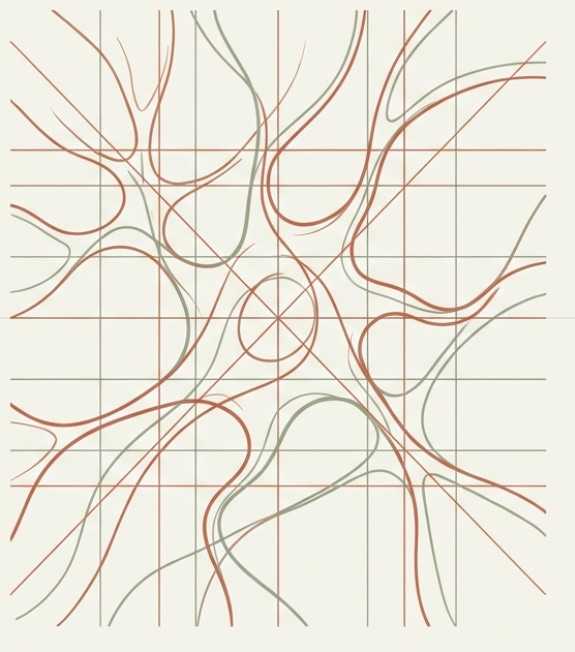

Humans process and integrate something AI fundamentally cannot: the subtleties and intangibles of emotion, reasoning, scenarios, and outcomes that exist between the facts.

Think of a question and answer as the foundation. Now overlay it with emotions, relationships, political dynamics, gut feelings, contextual cues, and imagined futures. These aren’t words in a database. They’re something else entirely — subjective, experiential, and deeply contextual. They are the ethereal glue between the facts, the stuff that cannot be stored as canonical entries in any system.

And here’s what most people get wrong: that glue isn’t noise. It isn’t bias to be engineered out. It’s infrastructure.

Neuroscientist Antonio Damasio spent decades studying patients who had damage to the ventromedial prefrontal cortex — the brain region that connects emotional processing to decision-making.¹ These patients were remarkable: their IQs were normal. Their memory was intact. Their analytical reasoning tested perfectly fine. By every cognitive measure, they should have been excellent decision-makers.

They were catastrophically bad at it.

Without the ability to generate what Damasio calls somatic markers — the body’s felt signals that tag options with emotional valence based on prior experience — these patients couldn’t decide. They’d deliberate endlessly over trivial choices. They’d make ruinous financial and personal decisions. They could analyze options perfectly but couldn’t feel which ones mattered. Rationality without emotion isn’t pure logic. It’s paralysis.

This is the mechanism behind the “ethereal glue.” Somatic markers are the body’s compressed summary of what happened last time you were in a situation like this one. They’re embodied predictions — approach or avoid, lean in or pull back, speak up or stay quiet — that arrive as felt sensations before conscious reasoning even begins. They are the gut feelings that guide choices, and Damasio’s research shows they are essential, not detrimental, to rational decision-making.

AI has no somatic markers. It has no body that has been in the world, accumulated consequences, and encoded them as felt states. It processes all options with equal analytical weight because it has no emotional history to tag some options as “this feels right” and others as “something’s off.” This isn’t a limitation that more parameters or bigger context windows will fix. It’s a limitation of architecture.

How are these things captured and reflected in AI? They aren’t. Not really.

AI is extraordinary at identifying statistical patterns in data and presenting them to us. But humans are extraordinary at recognizing patterns — meaningful ones, the kind that require lived experience, emotional intelligence, and situational judgment to interpret. We don’t need AI to explain patterns to us. We need it to present them richly enough that our own pattern recognition can engage with them.

This distinction — between statistical retrieval and experiential recognition — matters more than any technical benchmark. AI matches words to words. The human mind matches patterns to possibilities.

Your Brain Is Not a Camera. It’s a Prediction Engine.

Lately, I’ve been reading Michael Pollan’s A World Appears, and several of his insights resonate with the ideas in this article.² To understand why human cognition is so fundamentally different from AI, you have to understand what your brain is actually doing when you “perceive” the world. The answer, according to the Bayesian Brain hypothesis, is something far more sophisticated than passive observation.

Your brain doesn’t receive reality and then react to it. It generates predictions about what’s going to happen and then checks those predictions against incoming sensory data. Perception isn’t reception — it’s inference. Every moment, your brain is running an internal model of the world, projecting forward, and then updating when reality diverges from the expectation. Neuroscientists call this minimizing prediction error: the brain constantly narrows the gap between what it expects and what it encounters.

This means that expertise — real, deep expertise — is fundamentally about the quality of your internal predictions. An expert doesn’t just know more facts than a novice. They have better priors: more refined internal models that generate more accurate predictions about what’s going to happen next in their domain. The veteran sales rep walks into a meeting and already knows how it’s likely to unfold before the first handshake. The experienced surgeon senses something is off before the monitors confirm it. Their brains aren’t waiting for data. They’re anticipating it.

And crucially, somatic markers are the delivery mechanism for these predictions. When your predictive model detects a mismatch — when something in the environment doesn’t fit the pattern — the signal doesn’t arrive as an analytical readout. It arrives as a feeling. A tightening in the gut. A prickle of unease. A sudden alertness you can’t quite explain. The body marks the prediction error with emotional urgency before the conscious mind has time to reason through it. This is what gives predictions their motivational force — without the somatic marker, the prediction error might register cognitively but fail to trigger action.

Here’s a simple illustration Pollan makes: A hiker sees a dark shape in the woods. Their brain doesn’t wait to collect all available visual data before responding. It immediately generates a prediction (“bear”), and the somatic marker system triggers a cascade of physiological responses. Heart rate spikes. Muscles tense. Adrenaline surges. But as the hiker gets closer, more sensory input arrives, and the brain detects a prediction error. The shape doesn’t move. The texture is wrong. The model updates rapidly: not a bear. A boulder. The somatic alarm is dismissed. This ties into the OODA loop, which we will visit shortly.

Each of these updates takes milliseconds. No conscious deliberation. No analytical framework. Just a predictive model, marked by felt urgency, updating itself in real time against new evidence.
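The hiker’s update can be written as a toy Bayesian calculation. This is a sketch under loud assumptions: the prior and the likelihood numbers are invented for illustration, the function names are hypothetical, and nothing here claims to model actual neurons. It only shows the shape of the loop: predict, compare against evidence, update.

```python
import math

def surprise(prior: dict, likelihood: dict) -> float:
    """Prediction error as surprisal: -log of how probable the observation
    was under the current beliefs. Larger means more unexpected."""
    predicted = sum(prior[h] * likelihood[h] for h in prior)
    return -math.log(predicted)

def bayes_update(prior: dict, likelihood: dict) -> dict:
    """Posterior is proportional to prior times likelihood, normalized to sum to 1."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}

def walk_closer() -> dict:
    # Assumed prior: a dark shape in the woods first reads as "bear".
    belief = {"bear": 0.7, "boulder": 0.3}
    # Assumed likelihoods: P(observation | hypothesis) for each new cue.
    evidence = [
        ("shape does not move", {"bear": 0.2, "boulder": 0.9}),
        ("texture looks like rock", {"bear": 0.1, "boulder": 0.8}),
    ]
    for description, likelihood in evidence:
        error = surprise(belief, likelihood)
        belief = bayes_update(belief, likelihood)
        print(f"{description}: surprise={error:.2f}, P(bear)={belief['bear']:.2f}")
    return belief

if __name__ == "__main__":
    walk_closer()
```

With these made-up numbers, two cues are enough to flip the belief from mostly “bear” to mostly “boulder”. What the arithmetic leaves out is the part this article cares about: the felt alarm that makes the first prediction urgent.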

AI doesn’t work this way. An LLM processes a prompt, retrieves statistically probable responses, and generates output. It doesn’t maintain a running internal model of the situation. It doesn’t anticipate. It doesn’t experience prediction error. And it certainly doesn’t feel the significance of a mismatch. It calculates similarity — every time, from scratch, with no felt sense of what’s coming next.

This predictive architecture also explains something critical about how human consciousness works. According to Global Neuronal Workspace Theory, our brains consist of numerous specialized modules — processing vision, memory, emotion, spatial reasoning, language — all running simultaneously and unconsciously. (An agent swarm?) These modules compete for entry into conscious awareness in what amounts to a Darwinian process. Only the most relevant, urgent, or surprising signals break through to the surface.

And somatic markers play a critical role in this competition. They are one of the primary mechanisms by which signals gain urgency — by which a particular prediction error gets flagged as important enough to interrupt whatever else the conscious mind is doing. The gut feeling is literally the body’s way of saying: pay attention to this one.

This is a profound advantage over AI: human consciousness is a relevance filter, powered by embodied emotional signals that prioritize what matters right now. AI models, by contrast, tend to process all variables with equal statistical weight. They don’t know what matters right now. They know what correlated with outcomes in the training data. The difference is enormous, and it’s the difference between a system that retrieves information and one that understands context.

This predictive, filtering architecture — the brain as a Bayesian engine running inside a competitive workspace — is what makes human cognition irreplaceable. It’s not just that we process information differently from AI. We process reality differently. We’re not computing similarity scores against a corpus. We’re generating predictions about a living, unfolding world, and updating those predictions faster than any system can calculate.

In strategic terms, this is the “Orient” phase of John Boyd’s OODA loop: Observe, Orient, Decide, Act.³ (Remember the hiker and the bear?) The human who can update their internal model faster than the situation changes holds the advantage. AI can help you observe. But the orientation (the interpretation, the reframing, the felt sense of what the new information means) is where human cognition dominates.

Thirty Seconds in the Operating Room

Now let me show you what all of this looks like in practice — what it feels like from the inside when a predictive brain, loaded with years of experiential priors and sharpened by somatic markers, encounters a moment that matters.

A medical device sales rep is standing in the OR. The surgical team is running through the pre-procedure discussion — reviewing the case, confirming the plan, making sure everyone is aligned. It’s routine. The surgeon mentions, almost in passing, that the patient has an iodine allergy. It’s an offhand comment, a small data point in a flood of pre-operative information.

But the rep catches it.

Not because anyone asked him to screen for allergies. Not because a checklist flagged it. Not because an algorithm surfaced a risk score. He catches it because his predictive model — his Bayesian engine, tuned by years of experience — registers a prediction error. Something doesn’t fit. And the signal doesn’t arrive as a thought. It arrives as a feeling — a somatic marker, a flash of embodied unease that cuts through the ambient noise of the pre-op conversation and pushes itself into conscious awareness. Iodine allergy plus this specific product equals a problem. His body knew before his mind could articulate why.

He speaks up. The team pauses. They consider the implications. And they prevent what could have been a serious adverse event.

Now ask yourself: what system could have caught that?

The allergy was mentioned verbally, in passing, during a live conversation. The product’s iodine content is buried in technical specifications that the surgeon had no reason to cross-reference in that moment. The connection between these two facts existed in exactly one place: the rep’s experiential pattern library — the cognitive structure built over years of being in rooms like this one, absorbing context that no database captures, and encoding the consequences of past situations as somatic markers that fire automatically when the pattern recurs.

This is the lived, embodied dimension of cognition — what philosophers call qualia, the felt quality of conscious experience. It’s not just that the rep had information about iodine. It’s that he was there, in the room, immersed in the sensory and social reality of the situation. He could read the surgeon’s tone. He could feel the tempo of the pre-op conversation. He could sense the weight of the moment. All of that context — processed through his body’s somatic marker system — shaped which signals his brain surfaced and which it suppressed.

And here’s what’s critical: the somatic marker is what made him act. Knowing that the product contains iodine is cognitive. Feeling that this particular mention of an iodine allergy, in this particular room, at this particular moment, requires immediate intervention — that’s embodied. Without the felt urgency, the analytical recognition alone might not have been enough to make him interrupt a surgeon in a room full of professionals. The feeling gave the knowledge its force.

No AI system in the world could have made that connection in that moment. Not because the data didn’t exist, but because the situation in which the data mattered could not have been anticipated, structured, or indexed in advance. The relevance of that iodine allergy to that specific product in that specific moment was emergent — it arose from the live, unfolding, irreducibly human experience of being present in the room.

This is the kind of cognition we need to build around, not replace.

Recognition-Primed Decisions: How Experts Actually Think

In the 1980s, psychologist Gary Klein studied how firefighters and military commanders make life-or-death decisions under extreme time pressure. What he found upended the conventional model of rational decision-making.⁴

Experts don’t compare options analytically. They don’t weigh pros and cons in a mental spreadsheet. Instead, they recognize patterns instantly and know what to do. A fire commander walks into a burning building and feels that the floor is about to collapse — not because of a calculation, but because the scene matches a pattern from hundreds of previous fires encoded in their experience. Klein called this Recognition-Primed Decision making (RPD).

Klein was always somewhat vague about how recognition translates into action so quickly. Damasio provides the bridge. Somatic markers pre-filter options before conscious deliberation even begins. The firefighter doesn’t analytically determine the floor is about to collapse — his body tells him through a felt sense of danger that arrives before the thought. The pattern recognition and the somatic marker fire together as a coupled system: the recognition identifies the situation; the marker tells you what it means — approach, avoid, act now, wait.

RPD reveals something that aligns perfectly with the Bayesian Brain model: expertise isn’t about having more information. It’s about having better priors — more refined internal models that generate more accurate predictions, marked by more calibrated somatic responses. The expert’s “theory” about why they succeed is really their internal pattern library, built through thousands of cycles of prediction, correction, and embodied encoding. Each time they encounter a situation, predict an outcome, feel the result, and observe what actually happens, their model gets sharper. The firefighter who has walked into five hundred burning buildings has a predictive model of structural collapse — and a somatic vocabulary for danger — that no amount of analytical training can replicate.

That rep in the OR wasn’t running a mental algorithm. He was doing exactly what Klein’s firefighters do: recognizing a pattern so quickly that it felt like instinct. His brain generated a prediction (“this procedure will go normally”) and encountered data that created a prediction error (“wait, iodine allergy”), and his somatic system marked the anomaly with enough urgency to break through into conscious awareness and trigger action. The decision to speak up wasn’t the product of deliberation. It was the product of a finely tuned predictive engine, grounded in a body that had learned what matters.

The Science Behind the Instinct

Klein’s RPD framework doesn’t stand alone. It’s supported by decades of converging research on how human cognition actually works in complex, real-world environments.

Daniel Kahneman’s System 1 and System 2 framework describes two modes of thinking: System 1 is fast, automatic, and pattern-driven; System 2 is slow, deliberate, and analytical.⁵ Kahneman himself acknowledged that System 1 is heavily influenced by affect — by emotional valence. Damasio explains why: somatic markers are the mechanism through which emotion shapes fast cognition. They’re what makes System 1 smart rather than just fast. Without them, System 1 would be pattern recognition without prioritization — seeing everything but knowing the significance of nothing.

What’s striking is that most AI tools are designed to engage System 2 — they present confidence scores, analytical breakdowns, and reasoned recommendations that demand conscious evaluation. But experts operate primarily in System 1, guided by somatic markers that tell them what matters before they can explain why. They see the answer before they can articulate it and feel its rightness before they can justify it.

Here’s the critical warning for AI designers: forcing an expert to engage in System 2 thinking — requiring them to justify their intuition against an AI “confidence score” — actually degrades performance. It interrupts the fluid, automatic execution of expertise. It’s the cognitive equivalent of handing a concert pianist sheet music mid-performance. The conscious analysis breaks the very process that makes them an expert.

Gerd Gigerenzer’s research on ecological rationality goes further.⁶ His work demonstrates that simple heuristics (fast, frugal decision rules that exploit the structure of the environment) frequently outperform complex statistical models in uncertain, real-world conditions. More data and more computation don’t automatically produce better decisions. In fact, in environments with high uncertainty and limited data, fast intuitive judgment is often more accurate than algorithmic optimization. The expert’s gut isn’t irrational. It’s a finely calibrated instrument, somatic markers included, tuned to the structure of their specific domain.

Perhaps most directly relevant is a 2009 paper that Kahneman and Klein co-authored, “Conditions for Intuitive Expertise: A Failure to Disagree,” in which two of cognitive science’s most prominent and often-opposing thinkers agreed on a key finding: expert intuition is reliable when two conditions are met.⁷ First, the environment must have regular, learnable patterns. Second, the expert must have had sufficient practice with feedback to internalize those patterns.

This is critical because it draws a precise boundary around when human judgment works — and it maps exactly onto what a well-designed AI system can provide. Create an environment rich in patterns. Give experts repeated exposure with feedback. Their intuition — their somatic markers, their Bayesian priors, their RPD pattern libraries — becomes a precision instrument.

Which raises the question: what if we built AI systems that did exactly that?

The Architecture Most AI Gets Wrong

Most AI tools — especially in enterprise sales, healthcare, and business intelligence — try to replace human judgment with machine recommendations. They present confidence scores, bulleted action items, and prescriptive next steps. “This deal is at 85% risk. Recommended action: schedule follow-up within 48 hours.”

The problem? This approach actually slows experts down. Confidence scores and analytical breakdowns trigger System 2 thinking — the slow, effortful, serial processing that experts have learned to bypass. You’re asking a veteran firefighter to stop and read a spreadsheet while the building burns.

What if we inverted the approach entirely?

Instead of telling a sales rep that a deal is at risk, imagine showing them three similar deals side by side — the timeline progressions, actual conversation snippets, what happened next. Their brain instantly recognizes: “Oh, this is one of those deals where the champion lost internal support.” No confidence score needed. No action items required. The pattern fires, the somatic marker confirms it, and they know what to do.

The human doesn’t consume AI insights. Their pattern recognition validates which signals matter, filters noise from meaning, and continuously trains the system on what patterns are actually meaningful.

We call this Cognition-Integrated AI (CIAI): designing around human cognitive strengths rather than trying to overcome human limitations, treating human pattern recognition not as a user to serve, but as a feature to build around.

Human Cognition as System Architecture

This is the critical distinction that most AI builders miss. There’s a difference between data-driven pattern mining — where the machine finds patterns and dumps them on humans — and cognition-integrated pattern presentation, where the system and the human brain work as coupled components.

Human pattern recognition isn’t a user of the system. It’s a processing layer within the system.

The architecture looks like this: Raw data flows to agent-based pattern detection, which feeds a cognitive presentation layer, which activates the human cognition layer, which produces action and validation, which feeds back into system learning. Human cognition sits between pattern presentation and action, functioning as the validation filter, the contextual interpreter, the training signal, and the synthesis engine.

This isn’t just philosophy. It’s an engineering decision with concrete implications.
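To show what that engineering decision might look like, here is a minimal Python sketch of the loop described above: detection, presentation, human judgment, and feedback. Everything in it is an assumption made for illustration. The class name `CoupledSystem`, its methods, and the frequency-count “detector” are hypothetical stand-ins, and the human is modeled as a plain callback.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set

@dataclass
class CoupledSystem:
    # Patterns a human has acted on in earlier cycles (the system's "learning").
    validated: Set[str] = field(default_factory=set)

    def detect(self, raw_events: List[str]) -> List[str]:
        """Pattern detection stand-in: distinct event tags, most frequent first."""
        counts = {}
        for tag in raw_events:
            counts[tag] = counts.get(tag, 0) + 1
        return sorted(counts, key=lambda t: (-counts[t], t))

    def present(self, patterns: List[str], limit: int = 3) -> List[str]:
        """Presentation layer: human-validated patterns first, capped at `limit`."""
        ranked = sorted(patterns, key=lambda p: p not in self.validated)  # stable sort
        return ranked[:limit]

    def run_cycle(self, raw_events: List[str],
                  human: Callable[[List[str]], Set[str]]) -> Set[str]:
        """One pass: data -> detection -> presentation -> human -> feedback."""
        shown = self.present(self.detect(raw_events))
        acted_on = human(shown) & set(shown)  # human cognition layer decides
        self.validated |= acted_on            # validation feeds system learning
        return acted_on

if __name__ == "__main__":
    events = ["weekday", "weekday", "weekday", "pricing", "pricing", "champion"]
    system = CoupledSystem()
    # Hypothetical rep: acts on pricing patterns, ignores everything else.
    system.run_cycle(events, human=lambda shown: {"pricing"})
    print(system.present(system.detect(events)))
```

The design choice worth noticing is that the human is a required argument of `run_cycle`, not an optional reviewer bolted on afterward. Without that callback, the loop has nothing to learn from.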

Designing for Gestalt, Not Analysis

Human pattern recognition works through gestalt perception — seeing the whole immediately, not analyzing parts sequentially. When you show someone a table comparing Deal #1’s industry, size, timeline, and discount against Deal #2, you’ve triggered analytical mode. Serial processing. Slow. Effortful. Misses subtle patterns. And crucially, it bypasses the somatic marker system entirely — you can’t feel a spreadsheet.

But when you show three deal timelines side by side with conversation snippets in parallel columns, you’ve activated the visual cortex — the parallel processor. The brain extracts commonalities without conscious effort. The rep glances at the screen and sees that every prospect who asked about pricing went quiet for two weeks, then asked about ROI, then closed within a month. No one had to explain the pattern. The brain just saw it. And if the rep has been in similar deals before, they don’t just see the pattern — they feel it. The somatic markers fire: “I’ve been here. I know how this goes.”

The design principle: present information to activate the visual cortex and temporal pattern recognition, not the prefrontal analytical cortex. Reading a table is a serial process. Seeing a pattern is a parallel one. Design for the parallel processor — and for the embodied recognition system that gives patterns their meaning.

The Magic Number Three

Show a rep fifty similar deals and they drown. Show them ten and they start comparing attributes manually. Show them three carefully selected precedents and their brain instantly extracts what matters — without conscious effort.

This isn’t arbitrary. It’s based on the limits of human working memory. Herbert Simon called this bounded rationality: the recognition that human decision-making is shaped by cognitive constraints, and that effective systems work within those constraints rather than pretending they don’t exist.⁸ The system is designed around the brain’s architecture, not in spite of it.
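Picking the three precedents is the part a machine can do well. As a rough sketch, assuming each deal is described by a set of observed signals and using Jaccard overlap as the similarity measure (both are my assumptions, as are the deal names and the `pick_precedents` function), the selection could look like this in Python:

```python
import heapq

def jaccard(a: set, b: set) -> float:
    """Overlap between two signal sets: shared signals divided by all signals."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def pick_precedents(current: set, history: dict, k: int = 3) -> list:
    """Return the names of the k past deals most similar to the current one."""
    return heapq.nlargest(k, history, key=lambda name: jaccard(current, history[name]))

if __name__ == "__main__":
    # Hypothetical deal history, keyed by deal name.
    history = {
        "deal_a": {"pricing question", "two-week silence", "roi request"},
        "deal_b": {"pricing question", "two-week silence"},
        "deal_c": {"pricing question", "roi request", "legal review"},
        "deal_d": {"legal review"},
        "deal_e": {"champion left", "legal review"},
    }
    current = {"pricing question", "two-week silence", "roi request"}
    print(pick_precedents(current, history))
```

The cap at `k=3` is the point. The function could just as easily return fifty matches, and that is exactly the output that makes a rep drown.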

Affordances, Not Recommendations

James Gibson’s concept of affordances — the idea that objects in an environment naturally suggest how they can be used — provides the design principle here.⁹ A doorknob affords turning. You don’t need a sign that says “turn me.”

There’s a crucial difference between a recommendation (“You should send a follow-up email”) and an affordance (showing three similar deals where follow-up happened at this point, letting the rep see what was said and what happened). When the system shows what other reps did in analogous situations (the actual messages, the actual responses, the actual outcomes), the expert’s brain completes the pattern on its own. “I’m in this situation. I recognize what to do based on seeing what others did.”

Agency preserved. Expertise built. Authenticity maintained.

The Feedback Loop That Changes Everything

Here’s the most architecturally significant piece: what humans recognize becomes what the system presents.

When a rep engages with a pattern snapshot and takes action, that pattern gets reinforced. When a rep ignores a pattern, it gets suppressed. Over time, the similarity algorithms evolve to match human recognition. The system learns that humans recognize and act on pricing negotiation patterns but ignore day-of-week correlations. Meaningful patterns surface more. Noise gets filtered out.

Human cognition becomes the adaptive filter that tunes the entire system toward what actually matters. The machine can find a million statistical correlations. Only the human can tell you which ones mean something. Human validation trains the AI to recognize “meaning” and “relevance” — not just statistical correlation.
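Here is one hedged way to picture that reinforcement and suppression in Python. The multiplicative update rule, the `boost` and `decay` values, and the function names are all assumptions chosen for illustration. A production system would likely use something more careful, but the direction of the effect is the same.

```python
def update_weights(weights: dict, shown: list, acted_on: set,
                   boost: float = 1.2, decay: float = 0.9) -> dict:
    """Return new relevance weights: shown patterns the rep acted on are
    boosted, shown patterns the rep ignored decay. Unseen patterns are untouched."""
    updated = dict(weights)
    for pattern in shown:
        factor = boost if pattern in acted_on else decay
        updated[pattern] = updated.get(pattern, 1.0) * factor
    return updated

def simulate(rounds: int = 10) -> dict:
    """A rep who always acts on pricing patterns and always ignores day-of-week ones."""
    weights = {}
    shown = ["pricing negotiation", "day-of-week"]
    for _ in range(rounds):
        weights = update_weights(weights, shown, acted_on={"pricing negotiation"})
    return weights

if __name__ == "__main__":
    for pattern, weight in simulate().items():
        print(f"{pattern}: {weight:.2f}")
```

After a handful of rounds the pricing pattern outweighs the day-of-week correlation many times over, even though the machine found both. The statistics did not change. The human signal did.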

The Training Data Problem No One Talks About

Here’s an uncomfortable truth that most AI builders ignore: what gets logged is the post-hoc rationalization, not the actual pattern recognition that happened in the moment.

A rep closes a deal and writes in the notes: “Built relationship with CFO, demonstrated ROI, competitive displacement of incumbent.” That reads like a logical sequence. But that’s not what happened. What happened was a series of pattern recognitions, somatic markers, contextual reads, and in-the-moment judgments that can never be fully captured in text. The felt sense of “this CFO is warming up” or “this deal is slipping” doesn’t make it into the CRM. The embodied experience that guided every decision in real time gets compressed into a sanitized narrative after the fact.

Think about that rep in the OR. If you asked him afterward to write up what happened, he might say: “Identified potential allergy contraindication during pre-procedure review and flagged it for the surgical team.” That’s the sanitized, rational version — the tombstone data. What actually happened was that a fragment of an offhand comment collided with a prediction error in his Bayesian model, was marked with somatic urgency by a body that had learned what danger feels like in an operating room, was surfaced by his conscious relevance filter above thousands of other signals, and synthesized into action faster than he could consciously reason through it.

The CRM entry is the tombstone. It tells you someone died. It doesn’t tell you how they lived.

An LLM trained on these records learns to mimic expert outputs. It doesn’t learn why those outputs were right. It doesn’t know which features mattered. It doesn’t feel the somatic weight that made one signal urgent and another ignorable. You can’t reconstruct the event sequence that led to a decision because the rational narrative that would explain it never existed anywhere outside of the expert’s imagination. The decision came first. The explanation came after.

This is the fundamental limitation of training AI on human-generated records: you’re training on the map, not the territory.

Where AI Genuinely Wins

Intellectual honesty demands acknowledging where machines are simply better than humans.

AI excels at exhaustive search across massive datasets — finding the needle in a haystack of ten million records without fatigue or frustration. It excels at consistency, applying the same analytical rigor to the ten-thousandth case as it did to the first, without the cognitive fatigue that degrades human performance over long shifts. It excels at speed of retrieval, surfacing relevant precedents in milliseconds rather than the minutes or hours it would take a human to search their memory or a filing system. And it excels at the elimination of certain biases — it won’t anchor on a recent dramatic event or be swayed by a compelling but irrelevant narrative the way humans sometimes are.

These are genuine strengths, and pretending otherwise weakens the argument. The point isn’t that humans are better than machines. The point is that humans and machines are better at different things, and the winning architecture allocates cognitive labor to the right component.

The machine scans millions of data points. The human tells you which ones matter. The machine retrieves precedents instantly. The human tells you which precedents are actually analogous. The machine identifies statistical patterns. The human — guided by somatic markers, Bayesian priors, and situated judgment — tells you which patterns are meaningful and which are noise.

This is a coupled system. Neither component alone produces the outcomes that both together can achieve.

The Expertise Development Problem

There’s a practical consequence of AI-as-replacement that rarely gets discussed: if AI simply tells experts what to do, they never develop expertise. This is the dependency trap — and it’s a strategic risk for every organization that relies on prescriptive AI.

Consider a junior sales rep whose AI system generates prescriptive action items for every deal. They follow the recommendations, close some deals, lose others. But they never build the internal pattern library (the Bayesian priors, the somatic markers) that would make them an expert. They never develop the felt sense for when a deal is going sideways or when a prospect is ready to close. They become dependent on the system rather than augmented by it. Remove the AI and they’re stranded, like a pilot who can only fly on autopilot: functional in the routine, but helpless in the novel.

Damasio’s research makes this point with devastating clarity. His patients with damaged somatic marker circuitry could analyze perfectly. They could reason through options, evaluate evidence, and articulate logical conclusions. What they couldn’t do was decide — because they couldn’t feel which options mattered. A junior professional raised entirely on prescriptive AI faces an analogous risk: they develop analytical capabilities without the embodied, experiential foundation that makes those capabilities functional. They can process information but can’t feel its significance.

Klein’s research shows that pattern libraries are built through deliberate exposure to cases with feedback.⁴ Experts become experts by encountering situations, making predictions, feeling the results, seeing outcomes, and encoding everything — cognitive and somatic — into their internal models. Each cycle sharpens their priors. Each prediction error, marked by felt experience, refines their model. A cognition-integrated system that shows reps patterns from similar deals isn’t just a decision-support tool — it’s a training mechanism. It compresses twenty years of prediction-and-correction cycles into structured exposure, accelerating the development of genuine expertise.

In his later work, Seeing What Others Don’t, Klein explored how insight works — how experts not only recognize known patterns but discover new ones.¹⁰ The same architecture that supports recognition also supports discovery. When a rep sees three similar deals displayed together and notices a commonality that the system didn’t explicitly highlight, that’s an insight. That’s expertise developing in real time. That’s something no prescriptive AI system can produce.

Think about that OR rep again. He didn’t develop his ability to catch the iodine issue by reading a training manual or following an AI checklist. He developed it by being in the room, hundreds of times, absorbing the texture of how procedures unfold, what details matter, and how products interact with patients in the real world. Each case was another cycle of prediction, observation, embodied encoding, and model refinement. A system that accelerates that kind of exposure — showing new reps the patterns that veterans have internalized over decades — doesn’t just support decisions. It creates the next generation of experts.

The system doesn’t just help experts make better decisions today. It creates more experts for tomorrow.

Trust, Accountability, and Stakes

In high-stakes domains (medical devices in operating rooms, military command decisions, clinical trial protocols), we want a human in the loop for a reason that transcends cognitive architecture. It’s about moral responsibility and accountability.

When a patient has an adverse reaction, someone has to own that outcome. When a deal goes sideways and a client relationship is damaged, someone has to face that client. When a military commander’s decision costs lives, there’s a chain of responsibility that leads to a person, not an algorithm.

This isn’t a sentimental argument. It’s an institutional one. Our legal systems, regulatory frameworks, and social contracts are built around human accountability. The surgeon in that OR is responsible for that patient’s outcome. The rep who spoke up about the iodine allergy exercised judgment that carried weight precisely because it came from a person who was present, accountable, and willing to interrupt a room full of professionals based on something he felt as much as something he knew.

An AI flag in a risk dashboard doesn’t carry the same weight. It can’t read the room. It can’t gauge whether the surgeon will be receptive to the interruption. It can’t modulate its tone to match the gravity of the situation without undermining the surgeon’s authority. The rep did all of these things simultaneously, unconsciously, in real time — because that’s what humans do in high-stakes, socially complex environments. Meaning is socially constructed and situationally emergent. It requires an entity that is present — not just processing, but inhabiting the moment, feeling its weight.

As AI systems become more capable, the question of who bears responsibility for decisions becomes more urgent, not less. The human in the loop isn’t just a cognitive component. They’re the moral center of the system.

The Palantir Lesson

Consider Palantir. They don’t actually sell AI that replaces human judgment. They sell highly paid Forward Deployed Engineers — human consultants who do the deep cognitive work of understanding a customer’s domain, mapping their decision processes, and building context around their data. The engineers do the pattern recognition. The platform documents their work.

Selling “context graphs” without an army of forward-deployed engineers is selling the map without the territory. The thing you’re trying to capture doesn’t exist in the form you imagine. The patterns, the judgment, the contextual understanding — these emerge from human cognition interacting with information, not from the information itself.

“But Won’t AI Get There Eventually?”

This is the strongest counterargument, and it deserves a direct response.

Yes, AI models are becoming dramatically more capable. Multimodal models can process images, audio, and text simultaneously. Reasoning capabilities are improving. Context windows are expanding. It’s tempting to project these curves forward and conclude that everything described in this article will eventually be automated.

But this misunderstands the nature of the problem.

The challenge isn’t that AI can’t detect patterns. It’s getting better at that every day. The challenge is that meaning is context-dependent, socially constructed, and situationally emergent. The iodine allergy mattered because of that patient, with that product, in that room, at that moment. The relevance wasn’t stored anywhere. It emerged when a passing comment collided with a prediction in a brain that was embedded in the living situation, and the resulting prediction error was delivered by a somatic marker that gave it its urgency and its force.

Even a perfect model that had access to every allergy record, every product spec, and every surgical protocol couldn’t have replicated what happened — because the information that mattered wasn’t in any system. It was in the air. It was in a verbal aside. It was in the gap between what was formally communicated and what was actually said.

Damasio’s work makes the architectural limitation stark. By analogy, AI is cognitively what his patients with ventromedial prefrontal damage were neurologically. It can analyze. It can reason. It can retrieve and compare. But it has no somatic markers. It has no felt sense of what matters. It processes all options with equal analytical weight because it has no body, no emotional history, no accumulated felt consequences to tag some signals as urgent and others as ignorable. Damasio’s patients could still reason through options. They just couldn’t decide well. That is a close description of AI’s relationship to judgment.

The real world generates novel situations constantly. Every OR is different. Every deal is different. Every conversation unfolds in ways that no training set could anticipate. Human cognition handles this novelty not by retrieving stored patterns but by generating new predictions on the fly — updating internal models in real time, detecting prediction errors, marking them with somatic urgency, and synthesizing new interpretations faster than any external system can calculate. This is the Bayesian engine at work, powered by somatic markers: not looking up answers, but constructing them from the collision of prior experience with present reality.

This isn’t a temporary gap waiting to be closed. It’s a fundamental difference in how humans and machines process the world. Machines process representations of reality. Humans are embedded in reality. AI computes similarity. Humans minimize prediction error. AI processes options. Humans feel which ones matter. That difference changes what’s possible.

The productive question isn’t “when will AI replace human judgment?” It’s “how do we build systems that make human judgment as powerful as possible?”

The Key Insight

Human pattern recognition isn’t a bug to work around or a limitation to overcome. It’s the feature to design the architecture around.

The machine does what machines do well: scan millions of data points, find statistical similarities, retrieve precedents instantly, and operate tirelessly across enormous datasets.

The human does what humans do well: generate predictions, detect errors, feel which signals matter, recognize meaningful patterns, interpret context, make situated judgments, validate signal versus noise, and bring the weight of lived experience to bear on ambiguous situations.

The winning architecture treats both as components of a coupled system, not as separate entities where one serves the other.

The rep who uses this kind of system maintains agency, builds real expertise, and responds authentically to every situation — while having access to pattern recognition capabilities that would take twenty years to develop organically. They aren’t being replaced by AI. They’re being amplified by it.

In the end, it may be judgment that matters most. Not the artificial kind. The deeply, irreducibly human kind — grounded in a body that has been in the world, encoded its consequences, and learned to feel what matters.

And that’s not going anywhere.

Footnotes & Sources

¹ Damasio, A. R. (1994). Descartes’ Error: Emotion, Reason, and the Human Brain. G.P. Putnam. Damasio introduces the somatic marker hypothesis, demonstrating through clinical cases that patients with damage to the ventromedial prefrontal cortex retain full analytical capabilities but lose the ability to make sound decisions — because rationality requires emotional input. https://www.penguinrandomhouse.com/books/299019/descartes-error-by-antonio-damasio/

² Pollan, M. (2026). A World Appears. Penguin Press. Pollan explores the Bayesian Brain hypothesis and the predictive nature of perception, including the bear/boulder illustration of how the brain generates predictions and updates them against incoming sensory data. https://michaelpollan.com/books/a-world-appears/

³ Boyd, J. R. (1987). A Discourse on Winning and Losing. Unpublished briefing. Boyd’s OODA loop (Observe, Orient, Decide, Act) describes competitive advantage as the ability to update one’s internal model of a situation faster than one’s adversary — the “Orient” phase being where human cognition excels. https://en.wikipedia.org/wiki/OODA_loop

⁴ Klein, G. A. (1998). Sources of Power: How People Make Decisions. MIT Press. Klein’s foundational work on Recognition-Primed Decision making (RPD), based on studies of firefighters, military commanders, and other experts making high-stakes decisions under time pressure. Demonstrates that experts recognize patterns and act rather than analytically comparing options. https://mitpress.mit.edu/9780262611466/sources-of-power/

⁵ Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux. Kahneman’s dual-process theory describes System 1 (fast, automatic, intuitive) and System 2 (slow, deliberate, analytical) thinking, and the conditions under which each dominates decision-making. https://us.macmillan.com/books/9780374533557/thinkingfastandslow

⁶ Gigerenzer, G. (2007). Gut Feelings: The Intelligence of the Unconscious. Viking. Gigerenzer’s research on ecological rationality demonstrates that simple heuristics — fast, frugal decision rules tuned to the structure of the environment — frequently outperform complex statistical models in uncertain real-world conditions. https://www.penguinrandomhouse.com/books/298863/gut-feelings-by-gerd-gigerenzer/

⁷ Kahneman, D., & Klein, G. (2009). “Conditions for Intuitive Expertise: A Failure to Disagree.” American Psychologist, 64(6), 515–526. A landmark paper in which two often-opposing thinkers agree: expert intuition is reliable when the environment has regular, learnable patterns and the expert has had sufficient practice with feedback. https://psycnet.apa.org/record/2009-13007-001

⁸ Simon, H. A. (1957). Models of Man: Social and Rational. Wiley. Simon’s concept of bounded rationality recognizes that human decision-making is shaped by cognitive constraints — limited working memory, attention, and processing capacity — and that effective systems must work within these constraints rather than assuming unlimited rational capacity. https://en.wikipedia.org/wiki/Bounded_rationality

⁹ Gibson, J. J. (1979). The Ecological Approach to Visual Perception. Houghton Mifflin. Gibson’s affordance theory describes how objects in an environment naturally suggest their use — a doorknob affords turning — without requiring explicit instruction. Applied here to system design: show what can be done, rather than prescribing what should be done.

¹⁰ Klein, G. (2013). Seeing What Others Don’t: The Remarkable Ways We Gain Insights. PublicAffairs. Klein’s exploration of how experts not only recognize known patterns but discover new ones — how insight works as a cognitive process, and how exposure to rich contextual information accelerates the development of expertise.